
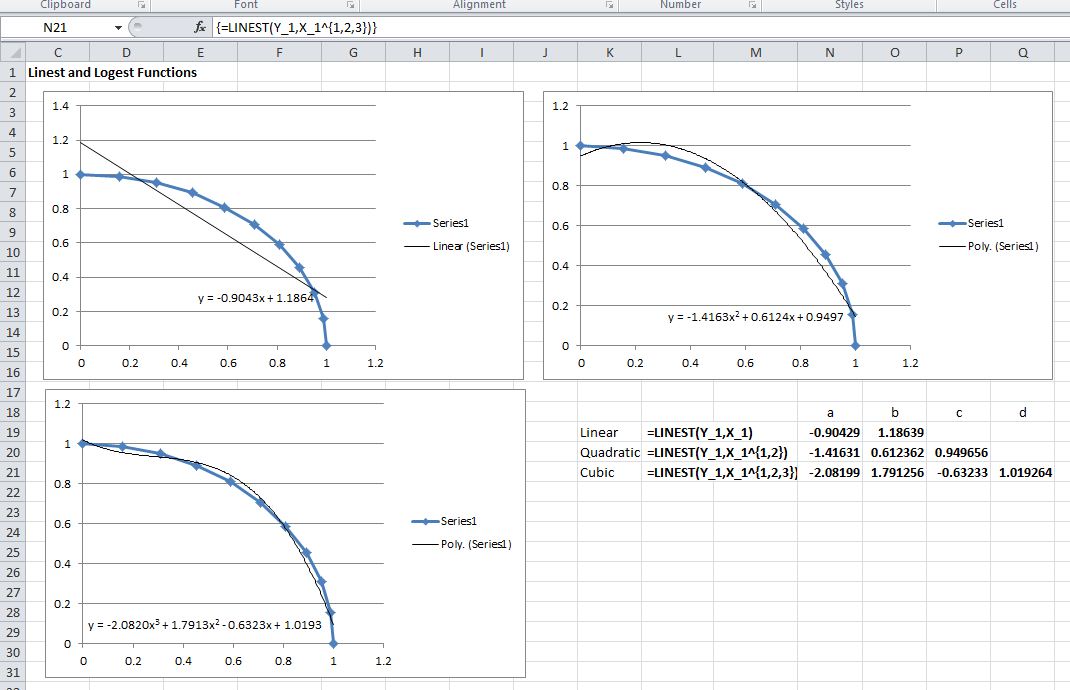

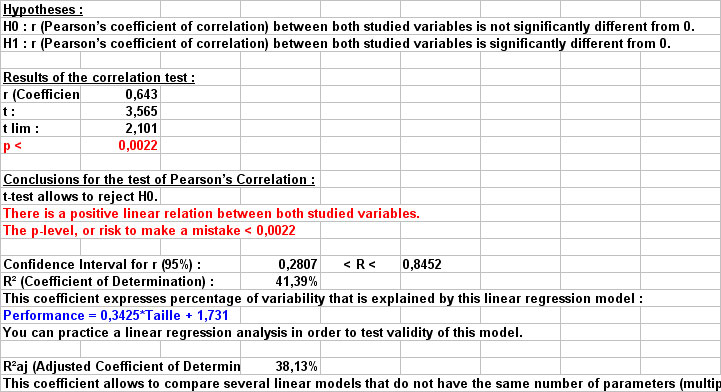

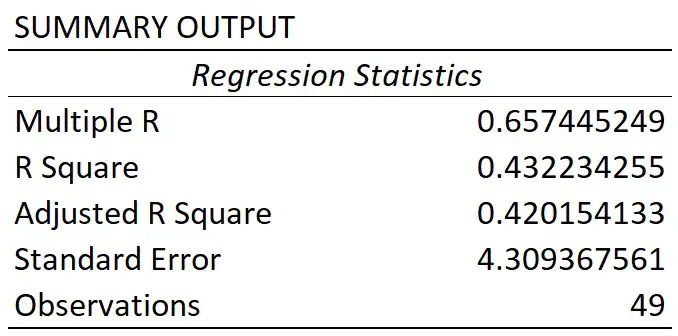

So, you want to run a linear regression in Excel. Maybe you need to do some forecasting, or maybe you want to tease out a relationship between two (or more) variables. Maybe you have to explain and predict some phenomenon, but you don't have a clue how it works or why it does what it does. These are some of the reasons we run regressions. Excel has a built-in Solver feature that does just that, but I would like to build some macros that can also perform regressions. It's a bit like taking an engine apart and rebuilding it to truly understand how it works.

This article explains why logistic regression performs better than linear regression on classification problems. It gives two reasons why linear regression is not suitable for classification:

- the predicted value is continuous, not probabilistic;
- it is sensitive to imbalanced data.

Supervised learning is an essential part of machine learning. It is the task of learning from the examples in a training dataset by mapping input variables to outcome labels. The learned mapping can then be used to predict the outcome of a new observation. Examples of classification problems:

1. Given a set of images of cats and dogs, identify whether the next image contains a dog or a cat (from Kaggle).
2. Given a set of movie reviews with sentiment labels, identify a new review's sentiment (from Kaggle).

Examples 3 and 4 are multiclass classification problems, where there are more than two outcomes.

Can classification problems be solved using linear regression? Let's say we create a perfectly balanced dataset (as all things should be). It contains a list of customers and a label indicating whether each customer made a purchase: 10 customers aged 10 to 19 who purchased, and 10 customers aged 20 to 29 who did not. "Purchased" is a binary label, where 0 denotes "customer did not make a purchase" and 1 denotes "customer made a purchase".

Problem #1: Predicted value is continuous, not probabilistic

In a binary classification problem, what we are interested in is the probability of an outcome occurring. A probability lies between 0 and 1: a probability of 1 means the outcome is certain to happen, and a probability of 0 means it certainly will not happen. In linear regression, however, we predict an unbounded number, which can fall outside the range 0 to 1. With our linear regression model, anyone aged 30 or older gets a negative predicted "purchased" value, which doesn't really make sense.

Sure, we could clip any value greater than 1 to 1 and any value lower than 0 to 0. That might work, but logistic regression is better suited to classification tasks, and we want to show that it yields better results than linear regression. Let's see how logistic regression classifies our dataset.

| | R² (higher is better) | RMSE (lower is better) |
|---|---|---|

R² measures how closely the observed data points fit the regression line; generally, the higher the better. R² alone is not enough, so we look at RMSE as well. RMSE measures how far the observed data points are from the model's predicted values; the lower the better. Judging by these metrics, logistic regression performed much better than linear regression on this classification task.

Problem #2: Sensitive to imbalanced data

Let's add 10 more customers aged between 60 and 70, train our linear regression model again and find the new best-fit line.
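The two problems described in this article can be reproduced with a small numerical sketch. It makes several assumptions that are not in the original:

- The ages are exact integers: 10 to 29 for the balanced set, since the text only says "between".
- The 10 added customers are aged 60 to 69 and did not purchase. The text does not give their label.
- Logistic regression is fitted with plain batch gradient descent on standardized age, which may not be how the author fitted it.

R² and RMSE are computed directly on the predicted values and probabilities. The function names (`make_dataset`, `fit_linear`, `linear_threshold`, `fit_logistic`, `r2_rmse`) are hypothetical, and only NumPy is required.

```python
import numpy as np


def make_dataset(extra_older=False):
    """Hypothetical data: ages 10-19 purchased (1), ages 20-29 did not (0)."""
    ages = np.arange(10, 30, dtype=float)
    purchased = (ages < 20).astype(float)
    if extra_older:
        # ASSUMPTION: 10 extra customers aged 60-69, all labelled "did not purchase".
        ages = np.concatenate([ages, np.arange(60, 70, dtype=float)])
        purchased = np.concatenate([purchased, np.zeros(10)])
    return ages, purchased


def fit_linear(x, y):
    """Ordinary least-squares line; returns (slope, intercept)."""
    slope, intercept = np.polyfit(x, y, 1)
    return slope, intercept


def linear_threshold(slope, intercept):
    """Age at which the fitted line crosses 0.5, the usual cut-off."""
    return (0.5 - intercept) / slope


def fit_logistic(x, y, lr=1.0, steps=5000):
    """Logistic regression by batch gradient descent on standardized x."""
    mu, sd = x.mean(), x.std()
    z = (x - mu) / sd
    w, b = 0.0, 0.0
    for _ in range(steps):
        p = 1.0 / (1.0 + np.exp(-(w * z + b)))  # predicted probabilities
        w -= lr * np.mean((p - y) * z)           # gradient of mean log-loss w.r.t. w
        b -= lr * np.mean(p - y)                 # gradient of mean log-loss w.r.t. b
    # Returns a function mapping raw ages to purchase probabilities.
    return lambda a: 1.0 / (1.0 + np.exp(-(w * (a - mu) / sd + b)))


def r2_rmse(y, pred):
    """R^2 and RMSE of predictions against the 0/1 labels."""
    sse = np.sum((y - pred) ** 2)
    sst = np.sum((y - y.mean()) ** 2)
    return 1.0 - sse / sst, np.sqrt(sse / len(y))


if __name__ == "__main__":
    ages, y = make_dataset()
    slope, intercept = fit_linear(ages, y)
    predict = fit_logistic(ages, y)
    print("linear   R2=%.3f RMSE=%.3f" % r2_rmse(y, slope * ages + intercept))
    print("logistic R2=%.3f RMSE=%.3f" % r2_rmse(y, predict(ages)))
    print("linear prediction at age 30:", slope * 30 + intercept)

    ages2, y2 = make_dataset(extra_older=True)
    s2, i2 = fit_linear(ages2, y2)
    print("0.5 crossing, balanced data:", linear_threshold(slope, intercept))
    print("0.5 crossing, with ages 60-69:", linear_threshold(s2, i2))
```

Under these assumptions, the linear fit on the balanced set crosses 0.5 at age 19.5 and predicts a negative value at age 30 (Problem #1). After adding the older non-purchasers, the crossing point moves to about age 22.6. Customers aged 20 to 22 are then classified as purchasers, and accuracy at the 0.5 cut-off drops to 27 of 30 (Problem #2).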